Installing Puppet Master and Agents on Multiple VMs Using Vagrant and VirtualBox

Automatically provision multiple VMs with Vagrant and VirtualBox. Automatically install, configure, and test Puppet Master and Puppet Agents on those VMs.

Introduction

Note: this post and the accompanying source code were updated on 12/16/2014 to v0.2.1. The update contains several changes that improve and simplify the install process.

Puppet Labs’ Open Source Puppet Agent/Master architecture is an effective solution to manage infrastructure and system configuration. However, for the average System Engineer or Software Developer, installing and configuring Puppet Master and Puppet Agent can be challenging. If the installation doesn’t work properly, the engineer’s stuck troubleshooting, or trying to remove and re-install Puppet.

A better solution is to automate the installation of Puppet Master and Puppet Agent on Virtual Machines (VMs). Automating the installation process makes it repeatable and consistent. Installing Puppet on VMs means the VMs can be snapshotted, cloned, or simply destroyed and recreated, if needed.

In this post, we will use Vagrant and VirtualBox to create three VMs. The VMs will be built from an Ubuntu 14.04.1 LTS (Trusty Tahr) Vagrant Box, previously hosted on Vagrant Cloud and now on Atlas. We will use a single JSON-format configuration file to build all three VMs automatically. As part of the Vagrant provisioning process, we will run a bootstrap shell script to install Puppet Master on the first VM (Puppet Master server) and Puppet Agent on the two remaining VMs (agent nodes).

Lastly, to test our Puppet installations, we will use Puppet to install some basic Puppet modules, including ntp and git on the server, and ntp, git, Docker, and Fig on the agent nodes.

All the source code for this project is on GitHub.

Vagrant

To begin the process, we will use the JSON-format configuration file to create the three VMs, using Vagrant and VirtualBox.

{

"nodes": {

"puppet.example.com": {

":ip": "192.168.32.5",

"ports": [],

":memory": 1024,

":bootstrap": "bootstrap-master.sh"

},

"node01.example.com": {

":ip": "192.168.32.10",

"ports": [],

":memory": 1024,

":bootstrap": "bootstrap-node.sh"

},

"node02.example.com": {

":ip": "192.168.32.20",

"ports": [],

":memory": 1024,

":bootstrap": "bootstrap-node.sh"

}

}

}

The Vagrantfile uses the JSON-format configuration file to provision the three VMs with a single ‘vagrant up‘ command. That’s it: fewer than 30 lines of actual code in the Vagrantfile to create as many VMs as we need. For this post’s example, we will not need to add any port mappings, although they can be added in the JSON configuration file (see the README.md for more directions). The Vagrant Box we are using already has the correct ports opened.

If you have not previously used the Ubuntu Vagrant Box, it will take a few minutes the first time for Vagrant to download it to the local Vagrant Box repository.

# vi: set ft=ruby :

# Builds Puppet Master and multiple Puppet Agent Nodes using JSON config file

# Author: Gary A. Stafford

require 'json'

# read VM configurations from the JSON file

nodes_config = (JSON.parse(File.read("nodes.json")))['nodes']

VAGRANTFILE_API_VERSION = "2"

Vagrant.configure(VAGRANTFILE_API_VERSION) do |config|

config.vm.box = "ubuntu/trusty64"

nodes_config.each do |node|

node_name = node[0] # name of node

node_values = node[1] # content of node

config.vm.define node_name do |config|

# configures all forwarding ports in JSON array

ports = node_values['ports']

ports.each do |port|

config.vm.network :forwarded_port,

host: port[':host'],

guest: port[':guest'],

id: port[':id']

end

config.vm.hostname = node_name

config.vm.network :private_network, ip: node_values[':ip']

config.vm.provider :virtualbox do |vb|

vb.customize ["modifyvm", :id, "--memory", node_values[':memory']]

vb.customize ["modifyvm", :id, "--name", node_name]

end

config.vm.provision :shell, :path => node_values[':bootstrap']

end

end

end
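The port-forwarding loop in the Vagrantfile above only does something when a node’s ‘ports‘ array is non-empty. As a minimal sketch of what such an entry might look like, the Ruby below parses a hypothetical node with one mapping and prints what the loop would forward. The key names (':host', ':guest', ':id') come from the Vagrantfile; the port numbers, the id value, and the forwarded_ports helper are assumptions made for illustration, so check the project README for the exact format.

```ruby
require 'json'

# Hypothetical nodes.json content with a single forwarded port.
# Only the key names are taken from the Vagrantfile; the values are made up.
PORT_EXAMPLE_JSON = <<~JSON
  {
    "nodes": {
      "node01.example.com": {
        ":ip": "192.168.32.10",
        "ports": [
          { ":host": 8080, ":guest": 80, ":id": "http" }
        ],
        ":memory": 1024,
        ":bootstrap": "bootstrap-node.sh"
      }
    }
  }
JSON

# Hypothetical helper: returns one hash per port mapping, using the same
# lookups the Vagrantfile performs before calling config.vm.network.
def forwarded_ports(nodes_config)
  nodes_config.flat_map do |node_name, node_values|
    node_values['ports'].map do |port|
      { node: node_name, host: port[':host'], guest: port[':guest'], id: port[':id'] }
    end
  end
end

nodes_config = JSON.parse(PORT_EXAMPLE_JSON)['nodes']
forwarded_ports(nodes_config).each do |mapping|
  puts "#{mapping[:node]}: host #{mapping[:host]} -> guest #{mapping[:guest]} (#{mapping[:id]})"
end
```

With an empty ‘ports‘ array, as in this post’s configuration, the helper returns an empty list and nothing is forwarded.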

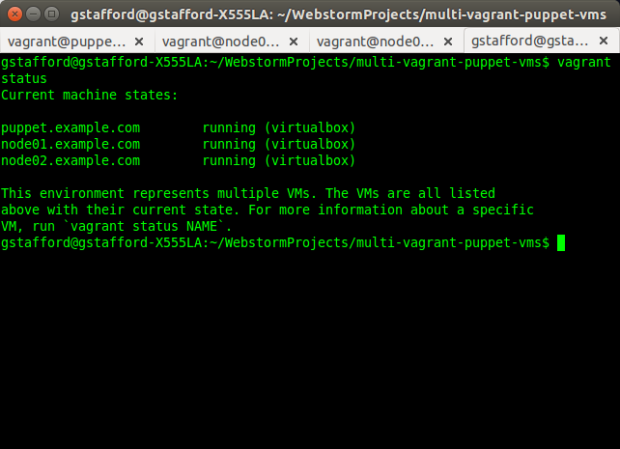

Once provisioned, the three VMs, also referred to as ‘Machines’ by Vagrant, should appear, as shown below, in Oracle VM VirtualBox Manager.

Vagrant Machines in VM VirtualBox Manager

The names of the VMs, as referenced in Vagrant commands, are the parent node names in the JSON configuration file (node_name), for example, ‘vagrant ssh puppet.example.com‘.

Vagrant Machine Names

Bootstrapping Puppet Master Server

As part of the Vagrant provisioning process, a bootstrap script is executed on each of the VMs (script shown below). This script does nearly all of the required work for us. There is one script for the Puppet Master server VM, and one shared by the agent nodes.

#!/bin/sh

# Run on VM to bootstrap Puppet Master server

if ps aux | grep "puppet master" | grep -v grep 2> /dev/null

then

echo "Puppet Master is already installed. Exiting..."

else

# Install Puppet Master

wget https://apt.puppetlabs.com/puppetlabs-release-trusty.deb && \

sudo dpkg -i puppetlabs-release-trusty.deb && \

sudo apt-get update -yq && sudo apt-get upgrade -yq && \

sudo apt-get install -yq puppetmaster

# Configure /etc/hosts file

echo "" | sudo tee --append /etc/hosts 2> /dev/null && \

echo "# Host config for Puppet Master and Agent Nodes" | sudo tee --append /etc/hosts 2> /dev/null && \

echo "192.168.32.5 puppet.example.com puppet" | sudo tee --append /etc/hosts 2> /dev/null && \

echo "192.168.32.10 node01.example.com node01" | sudo tee --append /etc/hosts 2> /dev/null && \

echo "192.168.32.20 node02.example.com node02" | sudo tee --append /etc/hosts 2> /dev/null

# Add optional alternate DNS names to /etc/puppet/puppet.conf

sudo sed -i 's/.*\[main\].*/&\ndns_alt_names = puppet,puppet.example.com/' /etc/puppet/puppet.conf

# Install some initial puppet modules on Puppet Master server

sudo puppet module install puppetlabs-ntp

sudo puppet module install garethr-docker

sudo puppet module install puppetlabs-git

sudo puppet module install puppetlabs-vcsrepo

sudo puppet module install garystafford-fig

# symlink manifest from Vagrant synced folder location

ln -s /vagrant/site.pp /etc/puppet/manifests/site.pp

fi
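The /etc/hosts entries in the script above repeat the names and IP addresses already present in the JSON configuration file. As a sketch only, and not something the project does, the same lines could be derived from that data in Ruby. The hosts_lines helper is hypothetical, and the short name is assumed to be the first label of the fully qualified name.

```ruby
require 'json'

# Same node names and IP addresses as the JSON configuration file in this post,
# trimmed to the one field this sketch needs.
HOSTS_EXAMPLE_JSON = <<~JSON
  {
    "nodes": {
      "puppet.example.com": { ":ip": "192.168.32.5" },
      "node01.example.com": { ":ip": "192.168.32.10" },
      "node02.example.com": { ":ip": "192.168.32.20" }
    }
  }
JSON

# Hypothetical helper: builds one "ip fqdn shortname" line per node.
def hosts_lines(nodes_config)
  nodes_config.map do |fqdn, values|
    short_name = fqdn.split('.').first
    "#{values[':ip']} #{fqdn} #{short_name}"
  end
end

puts hosts_lines(JSON.parse(HOSTS_EXAMPLE_JSON)['nodes'])
```

Generating the entries this way would keep the bootstrap scripts in step with the configuration file if a node were added or an address changed.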

There are a few last commands we need to run ourselves, from within the VMs. Once the provisioning process is complete, ‘vagrant ssh puppet.example.com‘ into the newly provisioned Puppet Master server. Below are the commands we need to run within the ‘puppet.example.com‘ VM.

sudo service puppetmaster status # test that puppet master was installed

sudo service puppetmaster stop

sudo puppet master --verbose --no-daemonize

# Ctrl+C to kill puppet master

sudo service puppetmaster start

sudo puppet cert list --all # check for 'puppet' cert

According to Puppet’s website, ‘these steps will create the CA certificate and the puppet master certificate, with the appropriate DNS names included.‘
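The DNS names come from the dns_alt_names setting that the bootstrap script adds to puppet.conf with sed. To make that one-liner easier to follow, here is a small Ruby sketch of the same transformation applied to a shortened, illustrative puppet.conf. The sample file content and the add_dns_alt_names helper are assumptions for illustration; the inserted line is the one used in the bootstrap script.

```ruby
# Shortened, illustrative puppet.conf; the real file on the VM has more settings.
SAMPLE_CONF = <<~CONF
  [main]
  logdir=/var/log/puppet

  [master]
  ssl_client_header = SSL_CLIENT_S_DN
CONF

ALT_NAMES_LINE = 'dns_alt_names = puppet,puppet.example.com'

# Hypothetical helper that mirrors the sed expression: every line containing
# "[main]" is kept and followed by the dns_alt_names line.
def add_dns_alt_names(conf)
  conf.gsub(/^.*\[main\].*$/) { |line| "#{line}\n#{ALT_NAMES_LINE}" }
end

puts add_dns_alt_names(SAMPLE_CONF)
```

The setting lands directly under the [main] section header, so it applies when the Puppet Master generates its certificate.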

Bootstrapping Puppet Agent Nodes

Now that the Puppet Master server is running, open a second terminal tab (‘Shift+Ctrl+T‘). Use the command, ‘vagrant ssh node01.example.com‘, to ssh into the new Puppet Agent node. The agent node bootstrap script should have already executed as part of the Vagrant provisioning process.

#!/bin/sh

# Run on VM to bootstrap Puppet Agent nodes

# http://blog.kloudless.com/2013/07/01/automating-development-environments-with-vagrant-and-puppet/

if ps aux | grep "puppet agent" | grep -v grep 2> /dev/null

then

echo "Puppet Agent is already installed. Moving on..."

else

sudo apt-get install -yq puppet

fi

# 'puppet resource cron ... user=root' writes to root's crontab, not /etc/crontab

if sudo crontab -l -u root 2> /dev/null | grep puppet

then

echo "Puppet Agent is already configured. Exiting..."

else

sudo apt-get update -yq && sudo apt-get upgrade -yq

sudo puppet resource cron puppet-agent ensure=present user=root minute=30 \

command='/usr/bin/puppet agent --onetime --no-daemonize --splay'

sudo puppet resource service puppet ensure=running enable=true

# Configure /etc/hosts file

echo "" | sudo tee --append /etc/hosts 2> /dev/null && \

echo "# Host config for Puppet Master and Agent Nodes" | sudo tee --append /etc/hosts 2> /dev/null && \

echo "192.168.32.5 puppet.example.com puppet" | sudo tee --append /etc/hosts 2> /dev/null && \

echo "192.168.32.10 node01.example.com node01" | sudo tee --append /etc/hosts 2> /dev/null && \

echo "192.168.32.20 node02.example.com node02" | sudo tee --append /etc/hosts 2> /dev/null

# Add agent section to /etc/puppet/puppet.conf

printf '\n[agent]\nserver=puppet\n' | sudo tee --append /etc/puppet/puppet.conf 2> /dev/null

sudo puppet agent --enable

fi

Run the two commands below within both the ‘node01.example.com‘ and ‘node02.example.com‘ agent nodes.

sudo service puppet status # test that agent was installed

sudo puppet agent --test --waitforcert=60 # initiate certificate signing request (CSR)

The second command above manually starts Puppet’s Certificate Signing Request (CSR) process, which generates the agent node’s private and public keys and submits a certificate request to Puppet’s built-in certificate authority (CA). Each Puppet Agent node must have its certificate signed by the Puppet Master before it can continue. According to Puppet’s website, “Before puppet agent nodes can retrieve their configuration catalogs, they need a signed certificate from the local Puppet certificate authority (CA). When using Puppet’s built-in CA (that is, not using an external CA), agents will submit a certificate signing request (CSR) to the CA Puppet Master and will retrieve a signed certificate once one is available.”

Agent Node Starting Puppet’s Certificate Signing Request (CSR) Process

Back on the Puppet Master server, run the following commands to sign the certificate(s) from the agent node(s). You may sign each node’s certificate individually, or wait and sign them all at once. Note that the agent node(s) will wait for the Puppet Master to sign the certificate before continuing with the Puppet Agent configuration run.

sudo puppet cert list # should see 'node01.example.com' cert waiting for signature

sudo puppet cert sign --all # sign the agent node certs

sudo puppet cert list --all # check for signed certs

Puppet Master Completing Puppet’s Certificate Signing Request (CSR) Process

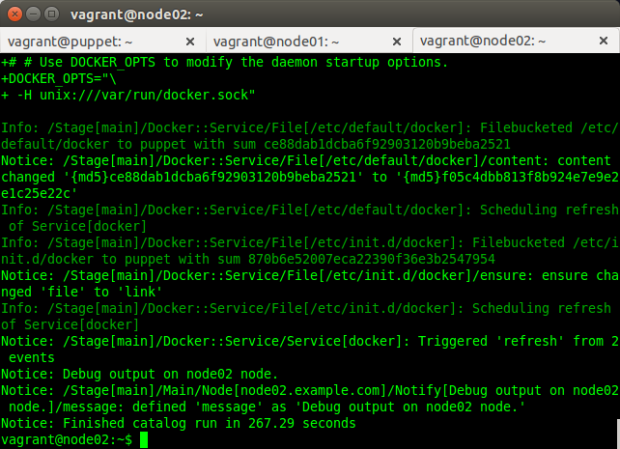

Once the certificate signing process is complete, the Puppet Agent retrieves the client configuration from the Puppet Master and applies it to the local agent node. The Puppet Agent will execute all applicable steps in the site.pp manifest on the Puppet Master server that are designated for that specific Puppet Agent node (i.e., ‘node 'node02.example.com' {...}‘).

Configuration Run Completed on Puppet Agent Node

Below is the main site.pp manifest on the Puppet Master server, applied by Puppet Agent on the agent nodes.

node default {

# Test message

notify { "Debug output on ${hostname} node.": }

include ntp, git

}

node 'node01.example.com', 'node02.example.com' {

# Test message

notify { "Debug output on ${hostname} node.": }

include ntp, git, docker, fig

}
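To make the effect of the two node definitions concrete, the Ruby below is a deliberately simplified model of how a node name maps to a set of classes. It assumes only exact-name definitions plus a default, which is all this manifest uses; Puppet’s real node matching supports more (regular expressions, for example). The classes_for helper and the constant names are hypothetical.

```ruby
# Classes per node definition, copied from the site.pp manifest above.
NODE_DEFINITIONS = {
  'node01.example.com' => %w[ntp git docker fig],
  'node02.example.com' => %w[ntp git docker fig]
}.freeze

# Classes from the 'node default' definition.
DEFAULT_CLASSES = %w[ntp git].freeze

# Hypothetical helper: an exact name match wins, otherwise the default applies.
def classes_for(node_name)
  NODE_DEFINITIONS.fetch(node_name, DEFAULT_CLASSES)
end

%w[puppet.example.com node01.example.com node02.example.com].each do |name|
  puts "#{name}: #{classes_for(name).join(', ')}"
end
```

In this model the Puppet Master server itself falls through to the default definition and receives only ntp and git, while both agent nodes also receive docker and fig.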

That’s it! You should now have one server VM running Puppet Master, and two agent node VMs running Puppet Agent. Both agent nodes should have successfully been registered with Puppet Master, and configured themselves based on the Puppet Master’s main manifest. Agent node configuration includes installing ntp, git, Fig, and Docker.